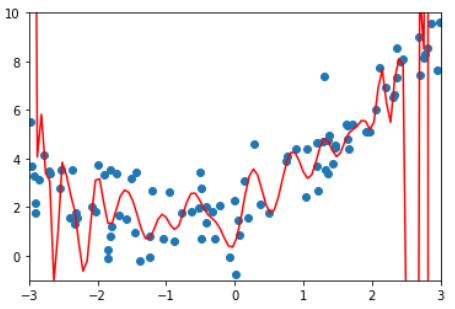

8-3 过拟合和欠拟合

准备数据

import numpy as np

import matplotlib.pyplot as plt

x = np.random.uniform(-3, 3, size=100)

X = x. reshape(-1, 1)

y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, size=100)使用线性回归

from sklearn.linear_model import LinearRegression

lin_reg = LinearRegression()

lin_reg.fit(X, y)

lin_reg.score(X, y) # score = 0.4953707811865009

y_predict = lin_reg.predict(X)

plt.scatter(x, y)

plt.plot(np.sort(x), y_predict[np.argsort(x)], color='r')

plt.show()

使用多项式回归

多项式回归算法

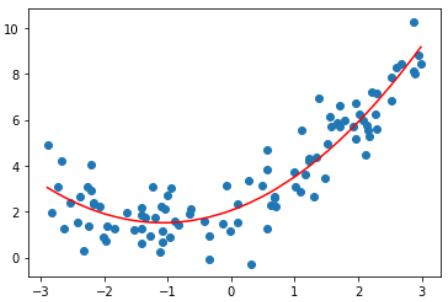

degree = 2的多项式回归

degree取不同值得到的均方误差和拟合结果

Last updated